6.3 KiB

Temporal AI Agent

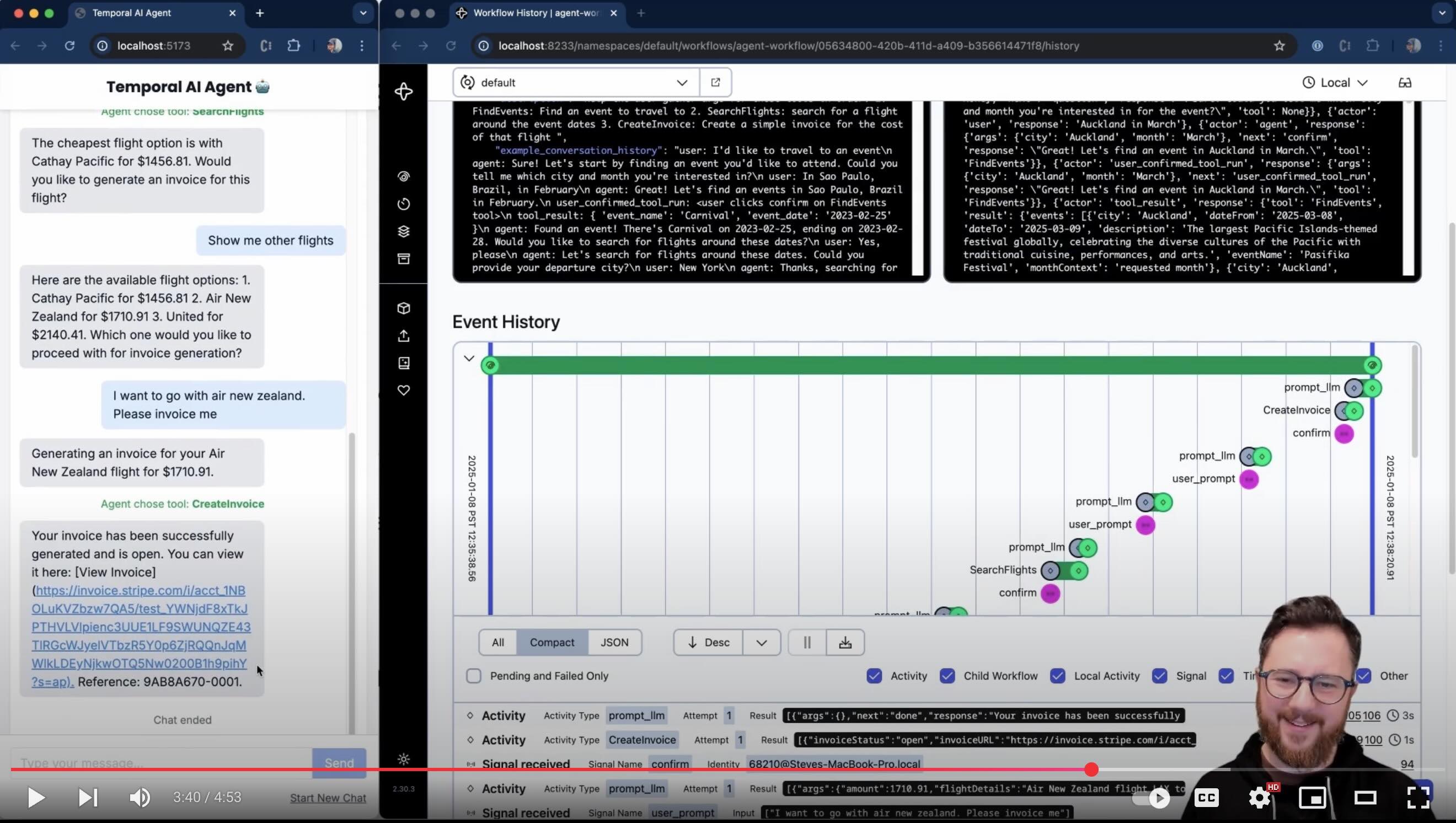

This demo shows a multi-turn conversation with an AI agent running inside a Temporal workflow. The goal is to collect information towards a goal. There's a simple DSL input for collecting information (currently set up to use mock functions to search for events, search for flights around those events, then create a test Stripe invoice for those flights). The AI will respond with clarifications and ask for any missing information to that goal. You can configure it to use ChatGPT 4o, Anthropic Claude, Google Gemini, Deepseek-V3 or a local LLM of your choice using Ollama.

Watch the demo (5 minute YouTube video)

Configuration

This application uses .env files for configuration. Copy the .env.example file to .env and update the values:

cp .env.example .env

LLM Provider Configuration

The agent can use OpenAI's GPT-4o, Google Gemini, Anthropic Claude, or a local LLM via Ollama. Set the LLM_PROVIDER environment variable in your .env file to choose the desired provider:

LLM_PROVIDER=openaifor OpenAI's GPT-4oLLM_PROVIDER=googlefor Google GeminiLLM_PROVIDER=anthropicfor Anthropic ClaudeLLM_PROVIDER=deepseekfor DeepSeek-V3LLM_PROVIDER=ollamafor running LLMs via Ollama (not recommended for this use case)

Option 1: OpenAI

If using OpenAI, ensure you have an OpenAI key for the GPT-4o model. Set this in the OPENAI_API_KEY environment variable in .env.

Option 2: Google Gemini

To use Google Gemini:

- Obtain a Google API key and set it in the

GOOGLE_API_KEYenvironment variable in.env. - Set

LLM_PROVIDER=googlein your.envfile.

Option 3: Anthropic Claude

To use Anthropic:

- Obtain an Anthropic API key and set it in the

ANTHROPIC_API_KEYenvironment variable in.env. - Set

LLM_PROVIDER=anthropicin your.envfile.

Option 4: Deepseek-V3

To use Deepseek-V3:

- Obtain a Deepseek API key and set it in the

DEEPSEEK_API_KEYenvironment variable in.env. - Set

LLM_PROVIDER=deepseekin your.envfile.

Option 5: Local LLM via Ollama (not recommended)

To use a local LLM with Ollama:

-

Install Ollama and the Qwen2.5 14B model.

- Run

ollama run <OLLAMA_MODEL_NAME>to start the model. Note that this model is about 9GB to download. - Example:

ollama run qwen2.5:14b

- Run

-

Set

LLM_PROVIDER=ollamain your.envfile andOLLAMA_MODEL_NAMEto the name of the model you installed.

Note: I found the other (hosted) LLMs to be MUCH more reliable for this use case. However, you can switch to Ollama if desired, and choose a suitably large model if your computer has the resources.

Agent Tools

- Requires a Rapidapi key for sky-scrapper (how we find flights). Set this in the

RAPIDAPI_KEYenvironment variable in .env- It's free to sign up and get a key at RapidAPI

- If you're lazy go to

tools/search_flights.pyand replace theget_flightsfunction with the mocksearch_flights_examplethat exists in the same file.

- Requires a Stripe key for the

create_invoicetool. Set this in theSTRIPE_API_KEYenvironment variable in .env- It's free to sign up and get a key at Stripe

- If you're lazy go to

tools/create_invoice.pyand replace thecreate_invoicefunction with the mockcreate_invoice_examplethat exists in the same file.

Configuring Temporal Connection

By default, this application will connect to a local Temporal server (localhost:7233) in the default namespace, using the agent-task-queue task queue. You can override these settings in your .env file.

Use Temporal Cloud

See .env.example for details on connecting to Temporal Cloud using mTLS or API key authentication.

Use a local Temporal Dev Server

On a Mac

brew install temporal

temporal server start-dev

See the Temporal documentation for other platforms.

Running the Application

Python Backend

Requires Poetry to manage dependencies.

-

python -m venv venv -

source venv/bin/activate -

poetry install

Run the following commands in separate terminal windows:

- Start the Temporal worker:

poetry run python scripts/run_worker.py

- Start the API server:

poetry run uvicorn api.main:app --reload

Access the API at /docs to see the available endpoints.

React UI

Start the frontend:

cd frontend

npm install

npx vite

Access the UI at http://localhost:5173

Customizing the Agent

tool_registry.pycontains the mapping of tool names to tool definitions (so the AI understands how to use them)goal_registry.pycontains descriptions of goals and the tools used to achieve them- The tools themselves are defined in their own files in

/tools - Note the mapping in

tools/__init__.pyto each tool - See main.py where some tool-specific logic is defined (todo, move this to the tool definition)

TODO

- I should prove this out with other tool definitions outside of the event/flight search case (take advantage of my nice DSL).

- Currently hardcoded to the Temporal dev server at localhost:7233. Need to support options incl Temporal Cloud.

- In a prod setting, I would need to ensure that payload data is stored separately (e.g. in S3 or a noSQL db - the claim-check pattern), or otherwise 'garbage collected'. Without these techniques, long conversations will fill up the workflow's conversation history, and start to breach Temporal event history payload limits.

- Continue-as-new shouldn't be a big consideration for this use case (as it would take many conversational turns to trigger). Regardless, I should ensure that it's able to carry the agent state over to the new workflow execution.

- Perhaps the UI should show when the LLM response is being retried (i.e. activity retry attempt because the LLM provided bad output)

- Tests would be nice!